Automotive AI: Face tracking and analysis in cars

Everything is about user experience these days, and cars are no exception. Consumers want everything to be efficient, tailored to their needs, and easily accessible with minimal effort. Artificial intelligence in cars can help meet those needs and turn the car into something that does significantly more than take its passengers from point A to point B.

The market for artificial intelligence in the automotive industry is predicted to surpass $12 billion by 2026, a clear sign that automotive AI is big business. Some AI-powered features are already available in consumer vehicles, while others are still being developed and tested.

The role of artificial intelligence in cars

Artificial intelligence systems keep taking on interesting new roles that simplify and enhance the driving experience. Although we still can’t take a nap behind the wheel or immerse ourselves in a movie, the latest car models can already carry out a range of tasks on their own.

Generally, we can classify automotive AI into two main (yet complementary) categories: driving automation and in-cabin sensing.

Driving automation

We are heading toward self-driving cars that can make decisions and control operations autonomously. However, you have to walk before you can run, and AI in cars is no different.

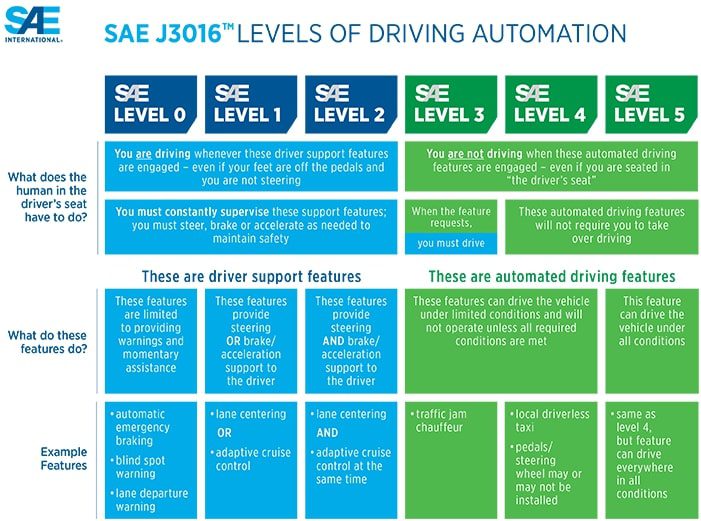

From driver support to full automation, the evolution of driving automation is commonly classified into five levels (above Level 0, which means no automation at all), based on how much control the AI has over the vehicle.

- LEVEL 1: Driver assistance

The first level of driving automation includes driver support functions such as adaptive cruise control or park assist. In other words, AI helps with either steering or speed control but never takes full control of the vehicle. This provides additional safety and comfort, which helps warm consumers up to the idea of having AI in the co-pilot seat. Consumer vehicles that qualify as Level 1 are the most common on roads today.

- LEVEL 2: Partial automation

A Level 2 vehicle comes with an advanced driver-assistance system (ADAS). At this level, the car can steer, accelerate, and brake by itself. However, the human driver still has to stay alert and keep their hands on the wheel. In practice, this translates to functions such as adaptive cruise control, active lane-keep assist, and automatic emergency braking. Unlike at Level 1, these assist technologies have to work together, controlling steering and speed at the same time.

Examples of Level 2 systems include Tesla’s Autopilot, Mercedes-Benz’s Distronic Plus, Cadillac’s Super Cruise, and Nissan’s ProPilot.

- LEVEL 3: Conditional automation

At Level 3, the car starts doing most of the heavy lifting. It can drive autonomously under certain conditions, such as on motorways. The person in the driver’s seat can engage in other activities, such as watching a movie or using their phone. However, they must be able to take over quickly when the car prompts them to. To make sure the driver stays sufficiently alert, a driver monitoring system is a must.

The Audi A8 was the first production car to claim Level 3 autonomy. However, since driving in an “eyes-off” state is still a regulatory gray area, its Traffic Jam Pilot has yet to be approved for sale in many countries.

- LEVEL 4: High automation

Level 4 vehicles can operate in self-driving mode, even in complex situations, within the areas and conditions they were designed for. Traditional controls such as a steering wheel are still available, so a human driver can manually override the system if necessary, for example, outside those areas.

One example of a Level 4 vehicle is Waymo, which started as Google’s self-driving car project. For several years, Waymo has been experimenting with an autonomous taxi service, although the rides typically included a human safety driver.

- LEVEL 5: Full automation

At this stage, driving is completely automated under all conditions. The car doesn’t require any intervention from humans, so there is no need for a steering wheel or pedals. Everyone in the cabin is simply a passenger. They can sit back and relax while the vehicle handles the driving all by itself.

Levels 3 to 5 are still largely in the testing phase. Since driving often involves unexpected and unpredictable situations, it requires more than following a set of rules. Just as human drivers keep learning and adapting with experience, self-driving cars should be able to do the same before we can fully rely on them.
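The split of responsibilities across the five levels can be summarized as a small lookup table. The sketch below is our own simplification, loosely following the SAE J3016 definitions; the `AutomationLevel` class, its field names, and the `human_must_stay_ready` helper are hypothetical and only illustrate why driver monitoring matters up to Level 3.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutomationLevel:
    name: str
    system_controls: str     # what the automation handles on its own
    driver_supervises: bool  # must a human watch the road at all times?
    takeover_expected: bool  # must a human be ready to take over on request?


# Simplified summary of Levels 1 to 5 (Level 0, no automation, is omitted).
LEVELS = {
    1: AutomationLevel("Driver assistance", "steering or speed", True, True),
    2: AutomationLevel("Partial automation", "steering and speed", True, True),
    3: AutomationLevel("Conditional automation", "all driving, in limited conditions", False, True),
    4: AutomationLevel("High automation", "all driving, within its design domain", False, False),
    5: AutomationLevel("Full automation", "all driving, in all conditions", False, False),
}


def human_must_stay_ready(level: int) -> bool:
    """True if a human may have to drive, so monitoring the driver is still relevant."""
    info = LEVELS[level]
    return info.driver_supervises or info.takeover_expected
```

With this table, `human_must_stay_ready(3)` is `True` while `human_must_stay_ready(4)` is `False`, which matches the point at which the text stops calling a driver monitoring system a must.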

In-cabin sensing

Self-driving cars may currently be the priority for the automotive industry, but AI can do more than drive. It can help keep us safe, comfortable and entertained in the cabin as well.

Currently, most AI-powered solutions in cars are built around advanced driver-assistance systems (ADAS). However, to truly assist the driver, the car also needs to monitor what is happening inside the vehicle. In-cabin sensing solutions support ADAS by gathering valuable information about the driver and the passengers. The goal is to tailor the in-cabin environment, safety features, and entertainment options to the passengers’ actual needs.

Driver monitoring system

Despite the advances in AI, human drivers still bear the main responsibility for getting the vehicle to its destination safely. This, of course, comes with the risk of human error, the cause of most car accidents. This is where driver monitoring systems come in.

A driver monitoring system, or DMS for short, helps keep the driver focused on the road by tracking and analyzing the driver’s face in real time. Information gathered from the face helps the system understand the driver’s state and mood, so it can address safety concerns in time and optimize the in-cabin experience.

DMS uses a range of computer vision technologies, including head tracking, eye and gaze tracking, and emotion recognition. Together, they allow the system to determine the driver’s viewing direction, attention level, and current emotions. This data is used to identify potential risks and suggest (or, in some cases, take) appropriate actions.

For example, when a driver is tired or intoxicated, this typically shows in the head dropping down, closed or barely open eyes, and sudden head movements. Once the system detects drowsiness, it can warn the driver to pay attention to the road, suggest taking a break, or even slow the car down.
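A common way to turn eye cues like these into a decision is PERCLOS, the proportion of time the eyes are (nearly) closed within a sliding window. The sketch below combines it with a head-drop check. It is a minimal illustration under stated assumptions, not Visage Technologies’ method: the per-frame `eye_openness` and `head_pitch_deg` values are assumed to come from a face tracker, and every threshold and the window length are hypothetical placeholders that a real system would calibrate.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class FrameSignals:
    """Per-frame measurements assumed to come from a face tracker (hypothetical fields)."""
    eye_openness: float    # 0.0 = fully closed, 1.0 = fully open
    head_pitch_deg: float  # negative values = head tilted down


class DrowsinessMonitor:
    """PERCLOS-style drowsiness check over a sliding window of frames."""

    def __init__(self, window_frames=900, closed_below=0.2,
                 perclos_limit=0.15, head_drop_deg=-20.0, head_drop_limit=0.1):
        # 900 frames is about 30 seconds at 30 fps; all defaults are placeholders.
        self.eyes_closed = deque(maxlen=window_frames)
        self.head_down = deque(maxlen=window_frames)
        self.closed_below = closed_below
        self.perclos_limit = perclos_limit
        self.head_drop_deg = head_drop_deg
        self.head_drop_limit = head_drop_limit

    def update(self, frame: FrameSignals) -> str:
        """Add one frame and return 'ok', 'warn', or 'suggest_break'."""
        self.eyes_closed.append(frame.eye_openness < self.closed_below)
        self.head_down.append(frame.head_pitch_deg < self.head_drop_deg)
        if len(self.eyes_closed) < self.eyes_closed.maxlen:
            return "ok"  # not enough history yet
        perclos = sum(self.eyes_closed) / len(self.eyes_closed)
        head_down_ratio = sum(self.head_down) / len(self.head_down)
        if perclos > self.perclos_limit and head_down_ratio > self.head_drop_limit:
            return "suggest_break"  # eyes closed too often and the head keeps dropping
        if perclos > self.perclos_limit:
            return "warn"           # eyes closed too often
        return "ok"
```

Returning graded states rather than a single flag lets the vehicle choose among the escalating responses described above.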

Furthermore, emotion recognition can be used to detect “undesirable” emotions such as anger. Since anger can lead to road rage, the system can intervene in time by offering alternative routes, slowing the car down, and so on.

Driver biometrics are also increasingly used in cars. Combined with face tracking and face analysis, face recognition can help build a meaningful driver profile that improves the entire in-cabin experience.

The most obvious use case is identifying the driver to prevent car theft. If an unauthorized person attempts to start the car, the system can notify the owner or block the car from starting. Likewise, if the driver switches places with a passenger during the ride, the system can intervene. This gives owners better control over their cars.

Besides keeping the driver safe and preventing theft, face recognition enables a better, more personalized in-cabin experience for all car occupants. Let’s say that the car is shared by an entire family. In that case, the system can remember the preferred settings of each family member and restore them automatically. For example, the car can automatically adjust the seat and mirrors, turn on the driver’s favorite music, set the preferred temperature, and more.
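Identifying the driver and restoring their settings usually comes down to comparing a face embedding (a numeric descriptor produced by a face recognition model) against enrolled profiles. The sketch below assumes such embeddings are already available; the `Profile` class, the 0.8 cosine-similarity threshold, and the returned fields are hypothetical and do not reflect any specific product’s API. Real embeddings typically have a hundred or more dimensions, and a suitable threshold depends on the model.

```python
from __future__ import annotations

import math
from dataclasses import dataclass, field


@dataclass
class Profile:
    name: str
    embedding: list[float]                        # enrolled face descriptor
    settings: dict = field(default_factory=dict)  # seat, mirrors, music, temperature...


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def identify(embedding: list[float], profiles: list[Profile], threshold: float = 0.8):
    """Return the best-matching enrolled profile, or None if nobody is close enough."""
    best, best_score = None, threshold
    for p in profiles:
        score = cosine_similarity(embedding, p.embedding)
        if score >= best_score:
            best, best_score = p, score
    return best


def on_driver_seated(embedding: list[float], profiles: list[Profile]) -> dict:
    profile = identify(embedding, profiles)
    if profile is None:
        # Unknown face: keep the car from starting and notify the owner.
        return {"allow_start": False, "notify_owner": True, "settings": {}}
    # Known driver: allow the start and restore their stored preferences.
    return {"allow_start": True, "notify_owner": False, "settings": profile.settings}
```

An unrecognized face falls through to the theft-prevention branch, while a recognized one returns that person’s stored preferences for the car to apply.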

Car owners can also set permissions or restrictions for other drivers, for example, a time window or speed limit for their children who are learning to drive.
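Restrictions like these can be expressed as a small per-driver policy that the car checks against the clock and the current speed. In the sketch below, the `Restrictions` fields and the `check_restrictions` function are hypothetical; to keep it short, a time window that spans midnight is not handled.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import time
from typing import Optional


@dataclass
class Restrictions:
    """Hypothetical per-driver policy set by the car owner."""
    max_speed_kmh: Optional[float] = None
    allowed_from: Optional[time] = None   # earliest time of day driving is allowed
    allowed_until: Optional[time] = None  # latest time of day driving is allowed


def check_restrictions(r: Restrictions, now: time, speed_kmh: float) -> list[str]:
    """Return the list of violated rules (empty if everything is fine)."""
    violations = []
    # Simple same-day window; windows spanning midnight are not supported here.
    if r.allowed_from is not None and r.allowed_until is not None:
        if not (r.allowed_from <= now <= r.allowed_until):
            violations.append("outside_allowed_hours")
    if r.max_speed_kmh is not None and speed_kmh > r.max_speed_kmh:
        violations.append("speed_limit_exceeded")
    return violations
```

For a policy such as `Restrictions(max_speed_kmh=90, allowed_from=time(6, 0), allowed_until=time(22, 0))`, driving at 100 km/h at 23:15 reports both `"outside_allowed_hours"` and `"speed_limit_exceeded"`.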

Occupant monitoring

As we get closer to autonomous and semi-autonomous cars, the focus of in-cabin monitoring expands from the driver to the entire cabin. To make each ride safe and enjoyable for every passenger in the vehicle, it’s crucial for the car to understand the passengers’ moods and states. This way, the system can respond to their needs in real time.

Like a DMS, a passenger monitoring system can recognize the passengers’ emotions, estimate their age and gender, identify them, and more. Combined, this data helps the system understand the in-cabin environment and react properly to specific states and situations. It can, for example, determine how many passengers are in the back seat, who they are, where they are sitting, and how they are feeling.

This data also allows the system to adapt safety features such as seatbelts and airbags to each passenger based on their age, gender, and position in the cabin.

The most vulnerable passengers, children, have a lot to gain in terms of safety, too. For example, when the system detects a child, it can remind the driver to check that all required safety measures, such as a child seat, are in place before starting the car. It can also alert the driver to a child or pet left unattended in the car to prevent hot car fatalities.
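The child-related checks above can be sketched as simple rules over the occupant monitor’s output. This assumes the system already reports each occupant’s seat and estimated age plus the cabin temperature; the `CabinState` fields, the age cutoff of 18, and the 30 °C threshold are hypothetical, and pets, which would need separate detection, are left out.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CabinState:
    """Snapshot assumed to come from the occupant monitoring system (hypothetical)."""
    occupants: list[tuple[str, float]]  # (seat, estimated age in years)
    engine_running: bool
    cabin_temp_c: float


def child_alerts(state: CabinState, adult_age: float = 18, hot_temp_c: float = 30.0) -> list[str]:
    """Return alerts related to children in the cabin."""
    children = [seat for seat, age in state.occupants if age < adult_age]
    adults = [seat for seat, age in state.occupants if age >= adult_age]
    alerts = []
    if children and state.engine_running:
        # A child is aboard while the engine is on: remind the driver to check the child seat.
        alerts.append("check_child_seat")
    if children and not adults and not state.engine_running:
        # A child alone in a parked car.
        alerts.append("unattended_child")
        if state.cabin_temp_c >= hot_temp_c:
            alerts.append("hot_cabin_emergency")
    return alerts
```

Keeping the temperature check nested under the unattended-child rule means the most urgent alert only fires when a child is actually alone in the car.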

Furthermore, age estimation can also prevent minors from starting the car while unattended (or at all). Combined with face recognition, the system can go one step further and, for example, recognize minors with a driving license and allow them to start the car when there is an adult present.

Finally, passenger monitoring can help create a more comfortable environment, too. This includes adjusting the temperature, music, lighting, seat positions, and other environmental controls according to the number of passengers, their demographics, or even their current emotional states.

For example, if the passengers get into a heated argument, the system can suggest soothing music. If they seem tired, it can adjust the seats so that they can take a nap more comfortably. If there’s a kid in the car, it can block content that is not kid-friendly. And so on.

A passenger monitoring system can also make use of face recognition to “remember” the passengers’ preferences. That way, the car can automatically personalize its environment as soon as the “recognized” passengers enter the cabin.

Your partner on the road to automated driving

Autonomous driving is the undisputed goal of an intense race among a number of large companies. Instead of sitting behind the wheel, future generations might get to enjoy the scenery or their digital screens while riding more safely and comfortably than ever.

Driver monitoring systems play a huge role in the transition from manual to semi-autonomous and fully autonomous vehicles. To minimize the driver’s workload while maximizing safety, they need to be built on reliable software.

At Visage Technologies, we’ve created face analysis technology that is extremely lightweight, trained for challenging conditions, and fully customizable. As a technology partner with almost two decades of experience in computer vision and machine learning, we can help you develop a custom, comprehensive solution. To request a free evaluation license or discuss a project, get in touch.
